The 2026 Blueprint for AI Agents in Google Cloud: Orchestrating the Next Era of Autonomous Enterprise Workflows

The enterprise landscape has reached a critical point in 2026, transitioning from the era of "simple prompts" to what we at happtiq define as the "agent leap". For technical architects and IT decision-makers, the challenge now lies in building autonomous and semi-autonomous systems capable of planning, reasoning, and acting across complex software environments to deliver specific business outcomes. As a Google Cloud Premier Partner, we are seeing a fundamental shift where AI agents are moving from experimental side projects to the very core of digital assembly lines that run entire workflows 24/7 at scale.

This post offers a detailed technical look at the current state of AI agents in Google Cloud. Whether you are evaluating the no-code capabilities of Gemini Enterprise, the low-code flexibility of Vertex AI Agent Builder, or the high-code precision of the Agent Development Kit (ADK), understanding how these tools interoperate is essential for maintaining a competitive edge. In the following sections, we discuss the architectural details, security guardrails, and communication protocols that define the 2026 agentic ecosystem, so you can move beyond chatbots and toward a fully orchestrated digital workforce.

Why is 2026 the Year of the Agents?

Achieving the full potential of AI agents requires a cultural shift in how we perceive the relationship between human strategy and machine execution. The data already supports the investment: 88% of agentic AI early adopters are seeing a positive ROI on at least one generative AI use case. At the heart of this success is the transition from single-task automation to multi-agent systems where specialized agents collaborate on complex workflows like customer service resolution, supply-chain optimization, and software development.

The shift is fundamental: instead of performing each step themselves, employees now set the strategy and oversee a system of agents responsible for execution. This mindset treats AI agents as always-available productivity instruments. To support it, Google Cloud's agent offerings can be grouped into three pillars, each covered below: Gemini Enterprise for universal access, Vertex AI Agent Builder (with Agent Engine for managed execution) for customized creation, and the Agent Development Kit (ADK) for high-code orchestration. Let’s start with Gemini Enterprise.

Pillar I: Gemini Enterprise – The Autonomous Employee Portal

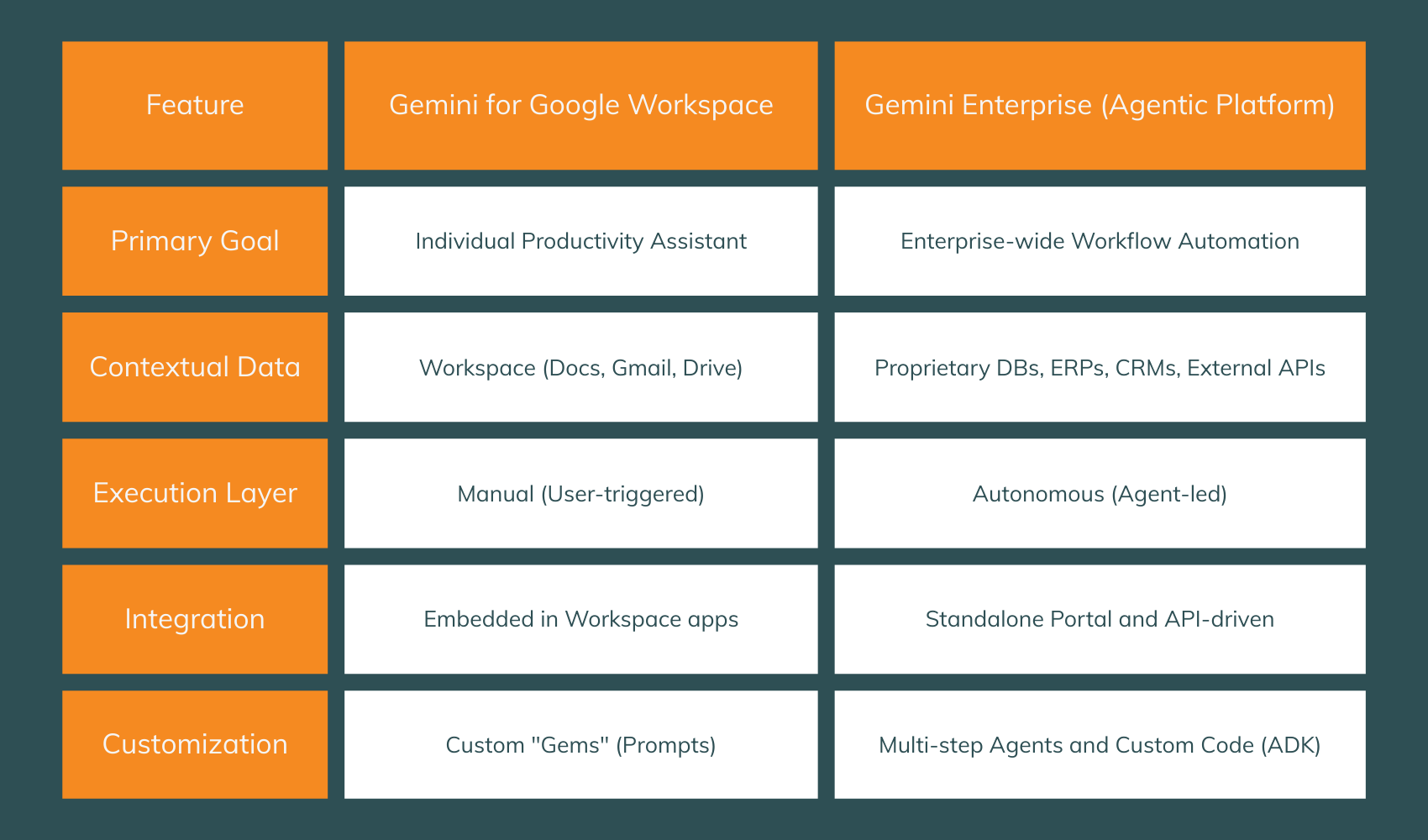

Gemini Enterprise has evolved into a standalone agentic platform that functions as the central "AI nervous system" for the modern organization. Unlike the standard Gemini for Google Workspace, which acts as an in-app sidekick, Gemini Enterprise is designed to synthesize data from proprietary internal systems and third-party sources to automate business-wide processes.

Distinguishing the Enterprise Tiers

Gemini Enterprise allows teams to discover, create, and share agents in a secure marketplace. This means a finance expert can build a no-code agent for invoice reconciliation and publish it so the entire accounting department can leverage that expertise as a shared automation.

Insider tip: we’re already sharing Gems internally at happtiq!

Pillar II: Vertex AI Agent Builder – The Developer’s Workbench

Vertex AI Agent Builder, by contrast, is the unified platform for building, scaling, and governing AI agents. It provides a full-stack foundation for the entire agent lifecycle, from prototyping in the Agent Garden to deployment on Agent Engine.

Navigating the Agent Garden

The Agent Garden serves as the starting point for most development cycles. It is a repository that aggregates curated samples and tools to get you started.

Prebuilt Agents: These are end-to-end solutions for specific domains like HR onboarding, inventory management, or technical support. You can deploy them with one click and then customize them with your data.

Modular Tools: If you are building a custom agent using the ADK, the Garden provides individual components for database interaction, web search, and code execution.

Specialized Solutions: Beyond general LLMs, the Garden offers access to specialized models which can be integrated into agentic workflows with minimal setup.

The Low-Code Visual Designer

For teams that need more than a template but less than a full Python stack, the Agent Designer (in Preview as of January 2026) provides a visual interface for designing and testing agents directly in the console. It lets you define "Playbooks" (natural-language instructions that guide agent behavior) instead of rigid decision trees.

Pillar III: The Agent Development Kit (ADK) – High-Code Orchestration

For the architects and engineers reading this, the Agent Development Kit (ADK) is the most critical tool in the shed. It is a flexible, modular framework designed to make agent development feel like standard software engineering. While optimized for the Gemini 3 Pro and Flash models, it remains model-agnostic and deployment-agnostic.

Core Agent Types in ADK

The ADK classifies agents based on how they process information and manage state:

LLM Agents: The "thinkers." They use models like Gemini 3 to reason through natural language and decide which tools to call.

Workflow Agents: The "managers." These are deterministic agents that follow strict logic (Sequence, Parallel, or Loop) without using an LLM to decide the path. They are essential for high-stakes, predictable processes.

Custom Agents: The "specialists." When a standard pattern doesn't fit, you can inherit from BaseAgent and write your own orchestration logic in Python (by overriding _run_async_impl), Go, or Java.
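To make the distinction concrete, here is a plain-Python sketch of the three deterministic control-flow patterns that workflow agents provide. It deliberately does not use the ADK: each "agent" is just a function that reads a shared state dictionary and returns updates, and the names run_sequence, run_parallel, run_loop, and refine_draft are our own. The point is that the path through the steps is fixed by code, not chosen by a model.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

State = Dict[str, object]
Step = Callable[[State], State]  # a step reads shared state and returns updates


def run_sequence(steps: List[Step], state: State) -> State:
    """Run steps one after another; each step sees the previous step's updates."""
    for step in steps:
        state.update(step(state))
    return state


def run_parallel(steps: List[Step], state: State) -> State:
    """Run independent steps concurrently on a snapshot, then merge their updates."""
    snapshot = dict(state)
    with ThreadPoolExecutor() as pool:
        results = list(pool.map(lambda step: step(snapshot), steps))
    for updates in results:
        state.update(updates)
    return state


def run_loop(step: Step, state: State, max_iterations: int) -> State:
    """Repeat a step until it sets state['done'] or the iteration cap is reached."""
    for _ in range(max_iterations):
        state.update(step(state))
        if state.get("done"):
            break
    return state


def refine_draft(state: State) -> State:
    """Demo step (hypothetical): bump a revision counter and finish after three passes."""
    revision = int(state.get("revision", 0)) + 1
    return {"revision": revision, "done": revision >= 3}
```

In the ADK, the corresponding building blocks are SequentialAgent, ParallelAgent, and LoopAgent; a LoopAgent stops when it reaches max_iterations or when a sub-agent escalates, which plays the role of the done flag here.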

Orchestration Patterns: Building Digital Assembly Lines

One of the primary benefits of the ADK is the ability to compose multi-agent systems (MAS) from specialized agents. Rather than one monolithic "boss" agent handling every task, the ADK uses parent-child relationships (a parent agent's sub_agents) to delegate tasks.

The Sequential Pipeline

This pattern is ideal for data processing where each step depends on the previous one. For example, a code development pipeline:

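```python
from google.adk.agents import LlmAgent, SequentialAgent

# --- 1. Define Sub-Agents for Each Pipeline Stage ---
# Model IDs as used in this post; check the Vertex AI model list for the exact current IDs.
code_writer = LlmAgent(
    name="CodeWriter",
    model="gemini-3-flash",
    instruction="Generate Python code based on the spec.",
    output_key="raw_code",  # the agent's final response is saved to session state["raw_code"]
)

code_reviewer = LlmAgent(
    name="CodeReviewer",
    model="gemini-3-pro",
    # {raw_code} is filled in from session state before the model is called
    instruction="Review the code in {raw_code} for PEP8 compliance.",
    output_key="review_feedback",
)

# --- 2. Orchestrate the Sequence ---
# SequentialAgent runs its sub-agents in list order, sharing one session state.
pipeline_root = SequentialAgent(
    name="DevPipeline",
    sub_agents=[code_writer, code_reviewer],
)
```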

The Coordinator (Dispatcher)

In this pattern, a top-level agent analyzes the user's intent and routes it to a specialist. In a retail setting, a "Coordinator" might route a query to a "Billing Specialist" or a "Technical Support" agent based on the user's request.
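A minimal sketch of the dispatcher idea, assuming three hypothetical specialists written as plain Python functions. Keyword matching stands in for the model's intent classification so the example runs offline; it illustrates the shape of the routing, not how an LLM coordinator actually decides.

```python
from typing import Callable, Dict


# Hypothetical specialists; in the ADK these would be sub-agents.
def billing_specialist(query: str) -> str:
    return f"[Billing] Looking into your account question: {query}"


def tech_support(query: str) -> str:
    return f"[Tech Support] Let's troubleshoot: {query}"


def general_help(query: str) -> str:
    return f"[General] Here is what I can tell you: {query}"


# Keyword routing is an assumption standing in for LLM intent classification.
ROUTES: Dict[str, Callable[[str], str]] = {
    "invoice": billing_specialist,
    "refund": billing_specialist,
    "charge": billing_specialist,
    "error": tech_support,
    "crash": tech_support,
    "login": tech_support,
}


def coordinator(query: str) -> str:
    """Route the query to the first specialist whose keyword appears in it."""
    lowered = query.lower()
    for keyword, specialist in ROUTES.items():
        if keyword in lowered:
            return specialist(query)
    return general_help(query)  # fallback when no specialist matches
```

In the ADK itself, the coordinator is typically an LlmAgent whose sub_agents are the specialists; the model picks a specialist based on each sub-agent's description and transfers control to it, which copes with phrasings that no keyword list anticipates.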

Session and Memory Management

Effective agents must maintain context over time. The ADK provides built-in services for Sessions and Memory Banks.

Sessions: Ensure continuity during a single interaction. The ADK handles the state, allowing agents to remember what was discussed three turns ago.

Memory Bank: A long-term storage layer where agents can persist user preferences (e.g., "I prefer concise explanations") across different sessions, enabling personalized experiences that improve over time.
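The split between the two layers can be sketched in plain Python. Session and MemoryBank below are hypothetical classes, not the ADK session service or the Vertex AI Memory Bank API; they only show which data lives for a single conversation, which survives across conversations, and how both might be combined into the context an agent sends to the model.

```python
from collections import defaultdict
from typing import Dict, List


class Session:
    """Short-term context for one conversation (hypothetical, not the ADK class)."""

    def __init__(self, user_id: str) -> None:
        self.user_id = user_id
        self.turns: List[str] = []

    def add_turn(self, text: str) -> None:
        self.turns.append(text)

    def recent(self, n: int = 3) -> List[str]:
        return self.turns[-n:]


class MemoryBank:
    """Long-term, per-user facts that outlive any single session (hypothetical)."""

    def __init__(self) -> None:
        self._facts: Dict[str, Dict[str, str]] = defaultdict(dict)

    def remember(self, user_id: str, key: str, value: str) -> None:
        self._facts[user_id][key] = value

    def recall(self, user_id: str) -> Dict[str, str]:
        return dict(self._facts[user_id])


def build_context(session: Session, memory: MemoryBank) -> str:
    """Combine long-term preferences with the last few turns of this session."""
    prefs = "; ".join(f"{k}={v}" for k, v in memory.recall(session.user_id).items())
    history = " | ".join(session.recent())
    return f"Preferences: {prefs or 'none'}\nRecent turns: {history or 'none'}"
```

A preference stored in one session shows up in the next, while that earlier session's raw turns do not.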

Gemini 3: The Engine of Agentic Reasoning

The underlying power of these agents today comes from the Gemini 3 model series. Gemini 3 Pro is designed to act as a "core orchestrator" for advanced workflows, with a focus on autonomous coding and complex multimodal tasks.

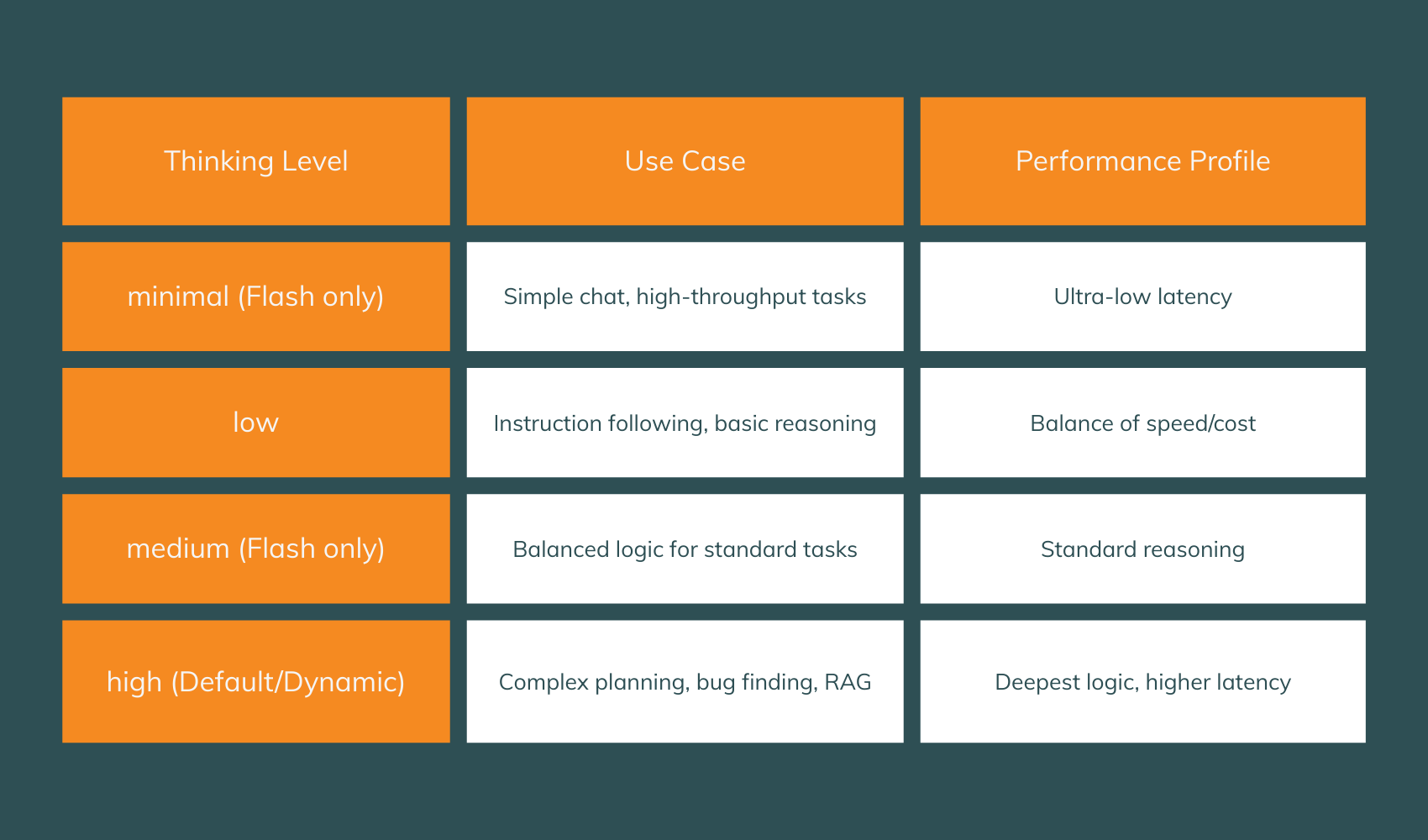

Native Reasoning and Thinking Levels

A transformative feature of Gemini 3 is the thinking_level parameter. It lets you control the maximum depth of the model's internal reasoning on a per-request basis: low favors latency and cost, while high (the default for Gemini 3 Pro) maximizes reasoning depth.

We recommend moving away from complex "Chain of Thought" (CoT) prompting techniques and instead leveraging the high thinking level to handle reasoning depth natively.
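As a sketch of per-request control, the helper below builds a generateContent request body for the Gemini REST API and picks the thinking level per task. The field names (generationConfig, thinkingConfig, thinkingLevel) reflect the REST format as we understand it, so verify them against the current API reference; the task labels and the low/high mapping are our own assumptions.

```python
from typing import Any, Dict

# Assumption: these task labels and the low/high mapping are illustrative choices.
HIGH_EFFORT_TASKS = {"plan", "debug", "multi_step"}


def pick_thinking_level(task_type: str) -> str:
    """Reasoning-heavy tasks get 'high'; simple, latency-sensitive calls get 'low'."""
    return "high" if task_type in HIGH_EFFORT_TASKS else "low"


def build_request(prompt: str, task_type: str) -> Dict[str, Any]:
    """Build a generateContent request body with a per-request thinking level."""
    return {
        "contents": [{"role": "user", "parts": [{"text": prompt}]}],
        "generationConfig": {
            # Field names as we understand the Gemini REST API; verify against the reference.
            "thinkingConfig": {"thinkingLevel": pick_thinking_level(task_type)},
        },
    }
```

Routing simple lookups to low and planning or debugging to high keeps latency and cost down without sprinkling "think step by step" instructions through your prompts.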

Thought Signatures: Solving the "Reasoning Drift" Problem

In multi-step workflows, maintaining a consistent "train of thought" has historically been difficult. Gemini 3 addresses this with Thought Signatures: encrypted representations of the model's internal reasoning.

When an agent calls a tool (e.g., check_flight), the API returns a signature <Sig_A>. When your code sends the tool output back to the model, you must include that signature. This ensures the model remembers its precise internal logic when it proceeds to the next step (e.g., book_taxi).

Failure to return these signatures during function calling with Gemini 3 Pro and Flash results in a 400 validation error. This strictness means a production agent cannot silently lose its reasoning context between steps, a common source of "drift" and hallucination in earlier multi-step agents. You can find a great introduction to implementing thought signatures in this notebook.
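The round trip can be sketched with plain dictionaries shaped like Gemini REST content. The key rule is to send the model's turn back exactly as received, so the thoughtSignature stays attached to its function call part. mock_validate is a simplified local stand-in for the server-side check (the real API applies it to the function calls of the current turn), and the flight number, tool result, and helper names are hypothetical.

```python
from typing import Any, Dict, List

Content = Dict[str, Any]


def model_turn_with_call(name: str, args: Dict[str, Any], signature: str) -> Content:
    """Shape of a model turn that requests a tool call and carries a thought signature."""
    return {
        "role": "model",
        "parts": [{"functionCall": {"name": name, "args": args}, "thoughtSignature": signature}],
    }


def append_tool_result(history: List[Content], model_turn: Content,
                       result: Dict[str, Any]) -> List[Content]:
    """Echo the model turn back unchanged (signature included), then add the tool output."""
    call = model_turn["parts"][0]["functionCall"]
    tool_turn = {"role": "user",
                 "parts": [{"functionResponse": {"name": call["name"], "response": result}}]}
    return history + [model_turn, tool_turn]


def mock_validate(history: List[Content]) -> None:
    """Simplified mock of the API check: in each model turn, the first
    function call part must still carry its thought signature."""
    for turn in history:
        if turn["role"] != "model":
            continue
        calls = [part for part in turn["parts"] if "functionCall" in part]
        if calls and not calls[0].get("thoughtSignature"):
            raise ValueError("400 (mock): function call is missing its thought signature")
```

The common bug is rebuilding the model turn from just the function name and arguments, which silently drops the signature. As we understand it, the official SDKs preserve signatures automatically when you append the model's full response content to the history.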

Protocols for the Agentic Web: A2A, MCP, and UCP

In 2026, we are moving from an "interface-based economy" to a "protocol-based economy". For agents to be truly effective, they must be able to communicate across framework and organizational boundaries.

Agent2Agent (A2A): The Universal Language

The A2A protocol is an open standard that allows agents built on different frameworks (e.g., ADK, CrewAI, LangGraph) to communicate securely. It treats agents as "opaque" entities, meaning they can collaborate without sharing internal memory or proprietary code.

Key components of A2A include:

Agent Cards: JSON metadata documents (similar in spirit to Model Cards) that describe an agent's skills, endpoint, and auth requirements.

Tasks: Standardized objects representing a unit of work, progressing through states such as submitted, working, input-required, and completed (or failed and canceled).

Artifacts: Immutable results (documents, images) produced by an agent that can be shared with the requester.
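Below is an illustrative Agent Card and a simplified task lifecycle in Python. The card's field names follow the A2A specification as we understand it, but the agent, URL, and skill are hypothetical, and the transition table covers only a subset of A2A task states with rules of our own choosing. Artifacts are wrapped in a read-only mapping to mirror their immutability.

```python
from types import MappingProxyType
from typing import Dict, List, Set

# Illustrative Agent Card; field names per the A2A spec as we understand it.
# The agent, URL, and skill are hypothetical.
AGENT_CARD = {
    "name": "invoice-reconciler",
    "description": "Matches incoming invoices against purchase orders.",
    "url": "https://agents.example.com/a2a/invoice-reconciler",
    "version": "1.0.0",
    "capabilities": {"streaming": True},
    "defaultInputModes": ["text"],
    "defaultOutputModes": ["text"],
    "skills": [
        {
            "id": "reconcile",
            "name": "Reconcile invoice",
            "description": "Flags mismatches between an invoice and its purchase order.",
            "tags": ["finance"],
        }
    ],
}

# Simplified lifecycle: a subset of A2A task states and the moves this sketch allows.
TRANSITIONS: Dict[str, Set[str]] = {
    "submitted": {"working", "canceled", "rejected"},
    "working": {"input-required", "completed", "failed", "canceled"},
    "input-required": {"working", "canceled"},
    "completed": set(),
    "failed": set(),
    "canceled": set(),
    "rejected": set(),
}


class Task:
    def __init__(self, task_id: str) -> None:
        self.id = task_id
        self.state = "submitted"
        self.artifacts: List[MappingProxyType] = []

    def move_to(self, new_state: str) -> None:
        """Advance the task, rejecting transitions the lifecycle does not allow."""
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"illegal transition: {self.state} -> {new_state}")
        self.state = new_state

    def complete(self, artifact: Dict[str, str]) -> None:
        """Finish the task and attach its result as a read-only artifact."""
        self.move_to("completed")
        self.artifacts.append(MappingProxyType(dict(artifact)))
```

The card is published at a well-known path on the agent's host (the exact path depends on the spec version), which is how a client discovers what an opaque remote agent can do before sending it a task.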

Model Context Protocol (MCP) and gRPC

Google Cloud now offers fully managed, remote MCP servers for its most popular services, including BigQuery and Google Maps. This allows your agents to "see" real-time geospatial data or reason over enterprise data without moving large datasets into the context window.
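Under the hood, MCP messages are JSON-RPC 2.0. The sketch below serializes a tools/call request of the kind an agent sends to a remote MCP server; the tool name execute_sql and its query argument are hypothetical, so check the server's actual tool list (via tools/list) before calling anything.

```python
import itertools
import json
from typing import Any, Dict

_request_ids = itertools.count(1)  # JSON-RPC ids let the client match responses to requests


def mcp_tool_call(tool_name: str, arguments: Dict[str, Any]) -> str:
    """Serialize an MCP 'tools/call' request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)
```

Only the query and its (typically small) result cross the wire, which is what keeps large tables out of the agent's context window.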

For enterprise-grade performance, Google has introduced gRPC as a transport for MCP. This provides:

Full-duplex bidirectional streaming: Allowing the agent and the tool to send continuous data streams simultaneously.

Resiliency: Built-in deadlines and timeouts prevent a single unresponsive tool from causing a system-wide failure in a multi-agent workflow.
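This is not gRPC code. It illustrates the same deadline idea with Python's asyncio, where wait_for plays the role of a gRPC deadline: two hypothetical tools are queried concurrently, and the unresponsive one is cut off instead of stalling the whole workflow. Tool names and delays are made up.

```python
import asyncio
from typing import Any, Awaitable, Dict, List


async def call_with_deadline(name: str, call: Awaitable[Any], deadline_s: float) -> Dict[str, Any]:
    """Await a tool call, but give up after deadline_s seconds instead of hanging."""
    try:
        result = await asyncio.wait_for(call, timeout=deadline_s)
    except asyncio.TimeoutError:
        return {"tool": name, "ok": False, "error": "deadline exceeded"}
    return {"tool": name, "ok": True, "result": result}


async def slow_tool(delay_s: float, value: str) -> str:
    """Hypothetical tool that takes delay_s seconds to answer."""
    await asyncio.sleep(delay_s)
    return value


async def fan_out() -> List[Dict[str, Any]]:
    """Query two tools concurrently; the unresponsive one times out without blocking the other."""
    return list(await asyncio.gather(
        call_with_deadline("inventory", slow_tool(0.01, "42 in stock"), deadline_s=0.5),
        call_with_deadline("legacy_erp", slow_tool(5.0, "late reply"), deadline_s=0.1),
    ))
```

The calling agent receives a structured failure for the slow tool and can retry, fall back, or report partial results, rather than waiting indefinitely.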

Universal Commerce Protocol (UCP)

Announced at NRF 2026, the UCP is essentially the "HTTP of e-commerce". It abstracts the complexity of commercial negotiation, allowing an agent to execute a purchase without knowing the internal API schema of the merchant. UCP defines a state machine for transactions, moving from product discovery to cart management and checkout without the user ever leaving the AI interface (like AI Mode in Search or Gemini).
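We have not reproduced the UCP schema here. As a generic sketch of what a transaction state machine buys you, the Python below enforces that a purchase moves from discovery to cart to checkout in order and never checks out an empty cart. Stage names, the transition table, and the SKU are our own assumptions, not UCP definitions.

```python
from enum import Enum
from typing import Dict, List


class Stage(Enum):
    DISCOVERY = "discovery"
    CART = "cart"
    CHECKOUT = "checkout"
    COMPLETED = "completed"


# Allowed moves; stage names and rules are illustrative, not from the UCP specification.
NEXT: Dict[Stage, List[Stage]] = {
    Stage.DISCOVERY: [Stage.CART],
    Stage.CART: [Stage.DISCOVERY, Stage.CHECKOUT],
    Stage.CHECKOUT: [Stage.CART, Stage.COMPLETED],
    Stage.COMPLETED: [],
}


class Transaction:
    def __init__(self) -> None:
        self.stage = Stage.DISCOVERY
        self.items: List[str] = []

    def advance(self, target: Stage) -> None:
        """Move to the target stage only if the state machine allows it."""
        if target not in NEXT[self.stage]:
            raise ValueError(f"cannot go from {self.stage.value} to {target.value}")
        if target is Stage.CHECKOUT and not self.items:
            raise ValueError("cannot check out an empty cart")
        self.stage = target

    def add_item(self, sku: str) -> None:
        if self.stage is not Stage.CART:
            raise ValueError("items can only be added in the cart stage")
        self.items.append(sku)
```

Because the merchant side enforces the transitions, an agent cannot skip straight to payment, regardless of how its prompt is phrased.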

Security and Governance: Managing the Digital Workforce

As agents gain autonomy, they become "first-class citizens" in your cloud environment. This requires a robust security posture.

Native Agent Identities and SPIFFE

Google Cloud now supports Native Agent Identities. Instead of using generic service accounts, each agent has its own unique principal tied to its resource path. These identities are based on the SPIFFE standard, and Google auto-provisions X.509 certificates for secure, certificate-based authentication between agents.

This allows for granular IAM policies:

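```bash
# Granting an agent read-only BigQuery access at the project level.
# For true least privilege, grant the role on the specific dataset instead of the whole project.
# The principal below is illustrative; use the exact principal identifier shown for your agent.
gcloud projects add-iam-policy-binding PROJECT_ID \
  --member="principal://agents.global.org-123/namespace/my-agent-id" \
  --role="roles/bigquery.dataViewer"
```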
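IAM enforces identity-based access for you, but a short sketch helps show what a SPIFFE ID encodes: a trust domain plus a workload path, written as spiffe://trust-domain/path. The Python below parses one with the standard library and applies a least-privilege check against a hypothetical policy; the IDs, trust domain, and policy table are invented and do not reflect Google's agent principal format.

```python
from typing import Dict, Set, Tuple
from urllib.parse import urlparse

# Hypothetical policy: which exact identities hold which roles.
POLICY: Dict[str, Set[str]] = {
    "spiffe://agents.example.org/ns/finance/invoice-agent": {"roles/bigquery.dataViewer"},
}


def parse_spiffe_id(spiffe_id: str) -> Tuple[str, str]:
    """Split a SPIFFE ID into (trust_domain, workload_path)."""
    parsed = urlparse(spiffe_id)
    if parsed.scheme != "spiffe" or not parsed.netloc:
        raise ValueError(f"not a SPIFFE ID: {spiffe_id}")
    return parsed.netloc, parsed.path


def is_allowed(spiffe_id: str, role: str, trusted_domain: str) -> bool:
    """Least privilege: the ID must come from the trusted domain AND hold an explicit grant."""
    domain, _ = parse_spiffe_id(spiffe_id)
    return domain == trusted_domain and role in POLICY.get(spiffe_id, set())
```

An identity from another trust domain, or a role that was never granted, is denied by default.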

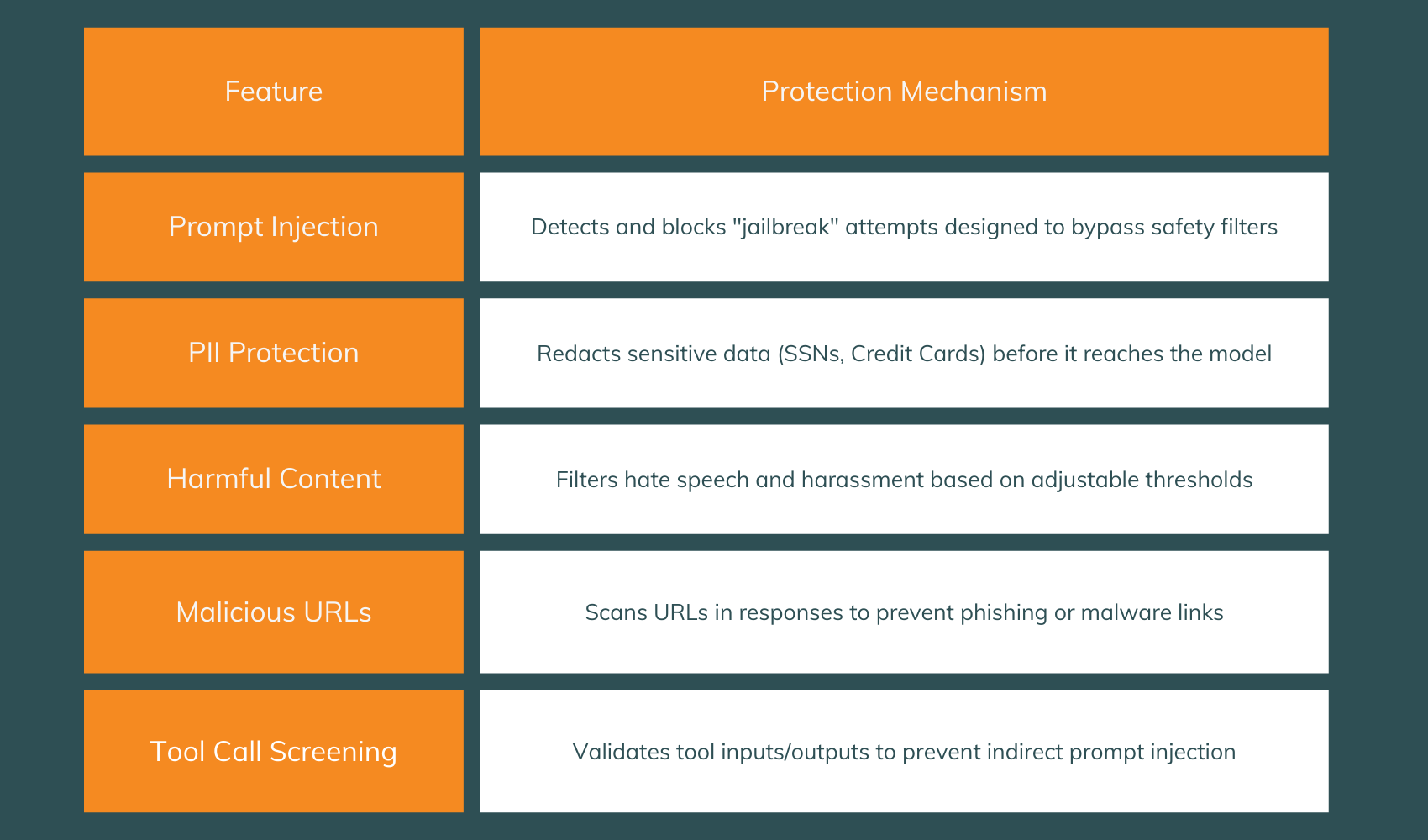

Model Armor: The AI Firewall

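Model Armor provides a critical layer of protection by screening agent interactions in both directions. It applies configurable "templates" to incoming prompts and outgoing responses; each template defines which filters run, such as prompt injection and jailbreak detection, sensitive data protection, and malicious URL detection.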
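To illustrate the two-sided screening flow only, here is a local Python sketch with regex-based "templates". It is not the Model Armor API and its filters are far cruder than Model Armor's; ScreeningTemplate, guarded_call, and both patterns are hypothetical. What carries over is the shape: screen the prompt before the agent sees it, and screen the response before the user does.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class ScreeningTemplate:
    """Hypothetical local template: a named set of regex filters (not the Model Armor API)."""
    name: str
    filters: List[re.Pattern] = field(default_factory=list)

    def violations(self, text: str) -> List[str]:
        """Return the patterns that matched; empty if the text is clean."""
        return [f.pattern for f in self.filters if f.search(text)]


# Inbound: a crude prompt-injection check. Outbound: card-number-like digit runs.
PROMPT_TEMPLATE = ScreeningTemplate(
    "inbound", [re.compile(r"ignore (all )?previous instructions", re.I)])
RESPONSE_TEMPLATE = ScreeningTemplate(
    "outbound", [re.compile(r"\b\d{4}[- ]?\d{4}[- ]?\d{4}[- ]?\d{4}\b")])


def guarded_call(prompt: str, agent: Callable[[str], str]) -> str:
    """Screen the prompt, call the agent, then screen the response before returning it."""
    if PROMPT_TEMPLATE.violations(prompt):
        return "Request blocked by inbound screening."
    response = agent(prompt)
    if RESPONSE_TEMPLATE.violations(response):
        return "Response withheld by outbound screening."
    return response
```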

Scaling in Production: Vertex AI Agent Engine

Once an agent is built with the ADK, it can be deployed to the Vertex AI Agent Engine (AE) runtime (Cloud Run and GKE are also supported targets). AE removes the complexity of managing infrastructure, providing a serverless environment that scales with demand.

Observability and Monitoring

Monitoring non-deterministic systems is a major challenge. The Agent Engine provides a dedicated dashboard to track:

Token Consumption and Throughput: Essential for cost management.

Latency and Error Rates: Critical for maintaining a good user experience.

Execution Traces: A "traces" tab allows you to visualize the exact sequence of steps and tool calls an agent took, making it easy to debug complex logic failures.
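As a sketch of how such dashboard numbers are derived, the Python below rolls a list of trace spans up into token totals, error rate, and latency figures, and renders the step sequence like a minimal traces view. The Span record and its fields are hypothetical and not the Agent Engine trace schema.

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List


@dataclass
class Span:
    """One step of an agent run (hypothetical record, not the Agent Engine schema)."""
    name: str
    latency_ms: float
    input_tokens: int = 0
    output_tokens: int = 0
    error: bool = False


def summarize(spans: List[Span]) -> Dict[str, float]:
    """Roll a trace up into token, error, and latency metrics."""
    if not spans:
        raise ValueError("no spans to summarize")
    return {
        "total_tokens": float(sum(s.input_tokens + s.output_tokens for s in spans)),
        "error_rate": sum(s.error for s in spans) / len(spans),
        "median_latency_ms": float(median(s.latency_ms for s in spans)),
        "max_latency_ms": float(max(s.latency_ms for s in spans)),
    }


def render_trace(spans: List[Span]) -> str:
    """Show the order of steps and tool calls, flagging failed ones."""
    return " -> ".join(f"{s.name}{' (error)' if s.error else ''}" for s in spans)
```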

The Evaluation Layer

Google has introduced an Evaluation Layer that includes a User Simulator. This allows you to simulate thousands of customer interactions to test your agent's reliability and brand alignment before it ever goes live.
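The core loop of a user simulator can be sketched in a few lines of Python. Here the personas are a hypothetical scripted list and toy_agent stands in for the agent under test; a real simulator would generate varied conversations with an LLM and score more than goal completion. A fixed random seed keeps runs reproducible, so scores can be compared across agent versions.

```python
import random
from typing import Callable, Dict, List

# Hypothetical scripted personas; a real user simulator would generate these with an LLM.
PERSONAS: List[Dict[str, str]] = [
    {"goal": "refund", "message": "My package never arrived, I want my money back"},
    {"goal": "tracking", "message": "Where is my order right now?"},
    {"goal": "refund", "message": "The faucet I bought leaks, can I return it?"},
]


def toy_agent(message: str) -> str:
    """Stand-in agent under test: maps a message to the goal it thinks the user has."""
    text = message.lower()
    if "money back" in text or "return" in text:
        return "refund"
    if "where is" in text:
        return "tracking"
    return "unknown"


def run_simulation(agent: Callable[[str], str], n: int, seed: int = 0) -> float:
    """Sample n simulated conversations and return the share where the agent met the goal."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n):
        persona = rng.choice(PERSONAS)
        if agent(persona["message"]) == persona["goal"]:
            hits += 1
    return hits / n
```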

Case Study: Gemini Enterprise for Customer Experience (CX)

A prime example of these technologies in action is the Gemini Enterprise for CX solution. Designed for retailers like Kroger and Lowe's, it unifies sales and service into a single "agentic commerce journey".

Multimodal Troubleshooting: A customer can share a video of a leaking faucet. The CX agent uses Gemini 3's visual processing to identify the part, check local inventory, and offer an immediate replacement, all while staying within the brand's voice.

Active Problem Solving: If a shipment is delayed, the agent doesn't just provide a link; it can actively resolve the fulfillment error and issue a real-time refund or credit.

Auto-Scoring: A new QA feature automatically scores customer conversations using conditional scorecards, ensuring that agents (both AI and human) are graded fairly based on context.

Conclusion: Partnering for the Agent Leap

The transition to AI agents in 2026 is not a minor iteration; it is a fundamental shift in how businesses operate. The combination of Gemini 3’s reasoning, the ADK’s orchestration, and Agent Engine’s scale provides a robust, enterprise-ready stack for any organization looking to automate complex workflows.

However, the "speed-to-value" gap is real. As a Google Cloud Premier Partner, happtiq specializes in bridging this gap. We help you navigate the complexities of multi-agent architectures, secure your agentic workflows, and integrate proprietary data.

Ready to start your journey?

Whether you are interested in a GenAI Discovery Workshop to identify high-impact use cases or a Cloud Migration Assessment to prepare your infrastructure for the agentic era, our team is here to help. Contact us today to turn your autonomous ambitions into production reality.